16.2.2021

Accelerating Towards Exascale

The path to exascale is accelerating. Tate Cantrell takes a look at the computational efficiency, innovation and GPU market competition that will help make this happen.

One of the most important advancements in computing today is the continued improvement of computational efficiency. There is no better stage for monitoring this progress than the Green500, a biannual ranking of the TOP500 supercomputers by performance per watt. Performance is measured the same way as for the TOP500: with the High Performance LINPACK (HPL) benchmark in double-precision floating-point (FP64) format. Unofficially tracked since 2009 and officially listed since 2013, the Green500 metrics show the trajectory of computational efficiency in supercomputers.

If we average the efficiency of the top 10 performers on each Green500 list, the chart shows that the industry’s best are increasing computational efficiency at a compound annual growth rate in excess of 40% (see Figure 1).

Figure 1 – A plot of the efficiency of the Top 10 Green500 machines

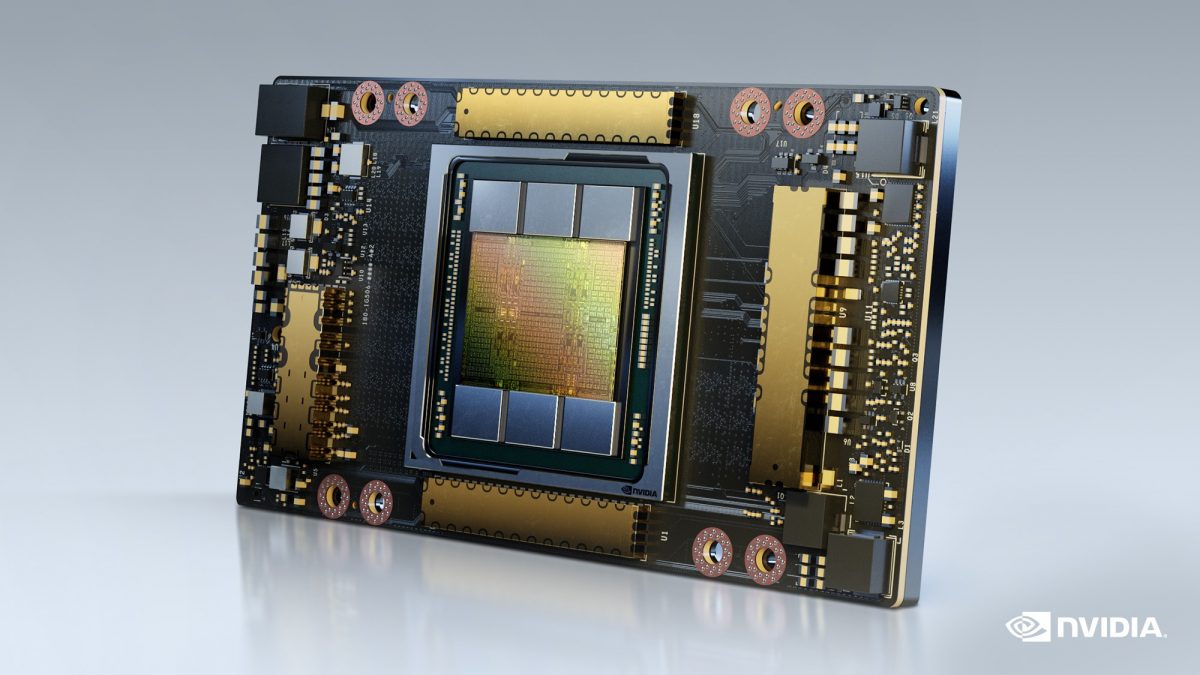

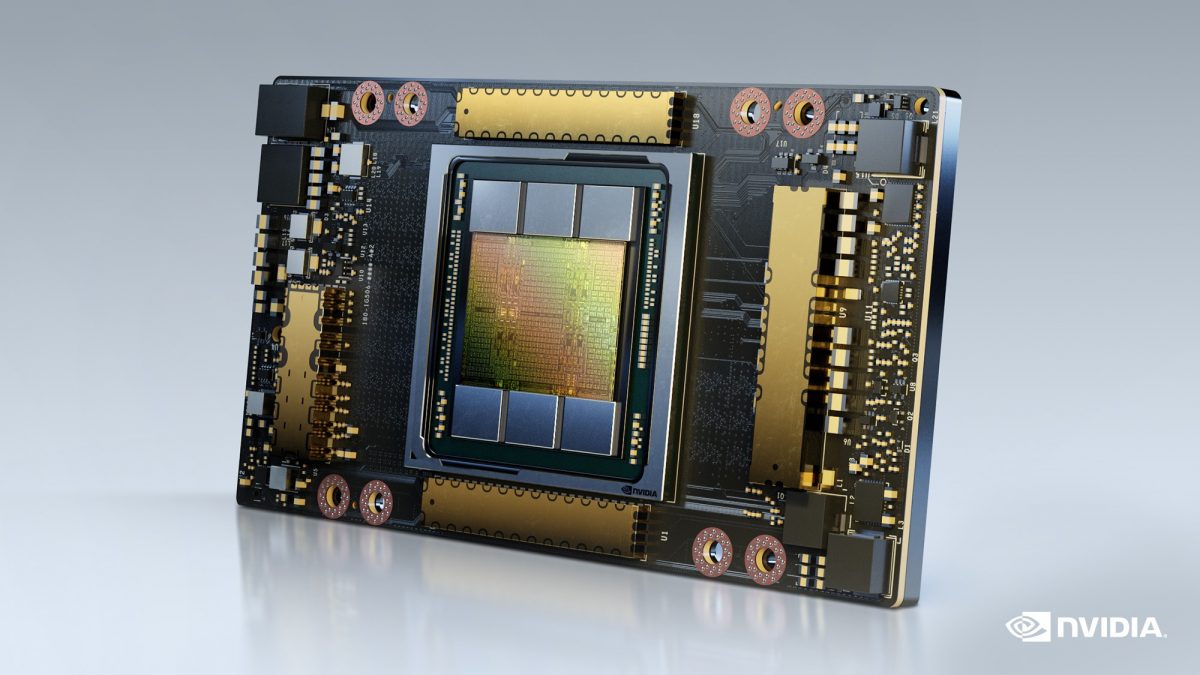
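For readers who want to reproduce this kind of trend figure, here is a minimal sketch of the two calculations involved: averaging the ten most efficient machines on a list, and deriving a compound annual growth rate from two such averages. The function names and the sample numbers are hypothetical and chosen only so the arithmetic is easy to follow; they are not actual Green500 data.

```python
def top10_average(efficiencies_gflops_per_watt):
    """Average efficiency of the ten most efficient systems in a list."""
    top10 = sorted(efficiencies_gflops_per_watt, reverse=True)[:10]
    return sum(top10) / len(top10)


def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Hypothetical averages (GFLOPS per watt), four years apart.
# These are illustrative numbers, not real Green500 results.
start_avg = 5.0
end_avg = 19.208

print(f"CAGR: {cagr(start_avg, end_avg, 4):.1%}")
```

A growth rate of 40% per year compounds quickly: in this illustrative case the average efficiency nearly quadruples in four years.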

In recent years, the growth in efficiency has been driven almost entirely by the adoption of accelerators in the design of the world’s most powerful supercomputers. In fact, the top spot on the Green500 list announced in November 2020 at the world-renowned SC20 supercomputing conference went to an NVIDIA DGX SuperPOD built on NVIDIA’s latest DGX A100 infrastructure, delivering over 26 gigaflops of computing for every watt of power consumed. And as anyone in the industry knows, NVIDIA is leading the way in accelerating CPUs with GPU chips and with the SDKs (Software Development Kits) that allow industries to optimise their most critical applications.

At SC20, NVIDIA was far from a sideshow. Four of the top five Green500 machines used the new NVIDIA A100 GPU as an accelerator, and of the top 40 systems in the Green500, all but three leveraged accelerators to achieve their benchmarks. We should expect this trend to continue, and not just from NVIDIA. During SC20, AMD announced its MI100 accelerator, which boasts 11.5 teraflops of peak double-precision floating-point performance. Assuming the chip runs at its maximum power rating of 300 watts, that is over 38 gigaflops of peak computing performance per watt. It will be interesting to see what systems are introduced at ISC 2021 and how the trends continue.
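The MI100 figure is simple arithmetic, sketched below. The helper name `gflops_per_watt` is made up for illustration; the inputs are the peak FP64 rate and maximum power rating quoted above.

```python
def gflops_per_watt(peak_teraflops, power_watts):
    """Convert a peak rate in teraflops and a power draw in watts to GFLOPS/W."""
    return peak_teraflops * 1000.0 / power_watts


# AMD MI100: 11.5 peak FP64 teraflops at a 300 W maximum power rating.
mi100 = gflops_per_watt(11.5, 300.0)
print(f"{mi100:.1f} GFLOPS/W")  # prints "38.3 GFLOPS/W"
```

Keep in mind that this is a chip-level peak number. A Green500 entry is a measured LINPACK result for a whole system, including CPUs, memory and networking, so the two figures are not directly comparable.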

But a question comes to mind: why? Why are GPUs suddenly being used to speed up supercomputers that compete on their ability to solve FP64 double-precision calculations? I think the answer is very simple: because they can.

Since NVIDIA first released its CUDA libraries in 2007, software developers have been able to use languages like C++ and Fortran to call directly on the resources of the GPU. With the 2017 release of the V100, NVIDIA built upon the CUDA cores to create tightly orchestrated computational units that perform full matrix arithmetic on each clock cycle. These computational units were given the name Tensor Cores, and they have become the de facto standard in AI hardware acceleration. NVIDIA produced SDK libraries that allowed developers to invoke Tensor Cores, often without making changes to their existing programs. By taking control of the hardware and its orchestration, and by providing functional libraries that use the hardware in the most efficient manner, NVIDIA was laying the groundwork for knocking it out of the park when the competition was computational efficiency.

In the V100 architecture, Tensor Cores were mixed-precision units that combined FP16 and FP32 arithmetic to deliver lightning-fast AI matrix calculations, with a slight reduction in accuracy that did not cause trouble for the vast majority of the AI software invoking these speed-ups. However, 32-bit numerics would not be acceptable for precision HPC codes, and most specifically, these speed-ups would not apply to the LINPACK benchmarking code at the core of the TOP500 ratings. But with the release of the NVIDIA A100 architecture in 2020, NVIDIA not only gave coders the ability to access 64-bit registers, it also coordinated the hardware so that developers could have Tensor Core units dedicated to FP64 applications. So now, whether an application is working to better understand complex molecules for drug discovery, probing the limits of physics models for potential sources of energy, or modeling atmospheric patterns to better predict and prepare for extreme weather, the NVIDIA A100 architecture can run full-precision FP64 arithmetic in parallel using FP64-enabled Tensor Cores. As a result, what was once the accelerator is now the main operator in the simulation. The GPU is no longer just a coprocessor; the GPU is the supercomputer.
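To get a feel for why mixed precision is fine for AI but not for LINPACK, here is a rough sketch that emulates reduced-precision arithmetic on the CPU using only the Python standard library. It is not how Tensor Cores are programmed, and the helper names are hypothetical; it simply rounds inputs to FP16 and the running sum to FP32, then compares the result with an FP64 calculation.

```python
import math
import struct


def round_to(fmt, value):
    """Round a Python float (FP64) to the IEEE 754 format `fmt` and back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


def fp16(value):
    return round_to("e", value)  # "e" is IEEE 754 half precision


def fp32(value):
    return round_to("f", value)  # "f" is IEEE 754 single precision


def dot_mixed(a, b):
    """Dot product with inputs rounded to FP16 and the sum kept in FP32."""
    acc = 0.0
    for x, y in zip(a, b):
        acc = fp32(acc + fp16(x) * fp16(y))
    return acc


def dot_fp64(a, b):
    """Dot product with FP64 products, summed with math.fsum."""
    return math.fsum(x * y for x, y in zip(a, b))


a = [0.1] * 1000
b = [0.1] * 1000
exact = 10.0  # 1000 * 0.1 * 0.1 in real arithmetic

print("mixed-precision error:", abs(dot_mixed(a, b) - exact))
print("FP64 error:           ", abs(dot_fp64(a, b) - exact))
```

The mixed-precision error comes almost entirely from rounding the inputs to FP16. For a neural network that is tolerable noise; for a benchmark that must solve a dense linear system to double-precision accuracy, it is not.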

In the age of convergence, the purveyors of hardware need to add value. NVIDIA has added the most value by taking a piece of computational hardware, its GPU, and wrapping it with a beautifully orchestrated set of software libraries that reduce the effort required to leverage the computational efficiencies on offer. But not only that: with the advent of its newest architectures, the GPU has moved beyond rendering and even beyond artificial intelligence to take on the highest-precision, most complex simulation codes out there. NVIDIA’s approach seems to be to offer a piece of hardware that unifies HPC and AI on a single platform. Whether the code is simulating the molecular dynamics of life-saving drugs or leveraging machine learning to limit the universe of input conditions to be applied to those simulations, it is very likely to benefit from the acceleration tools presented by Tensor Cores. But back to the value discussion: is the NVIDIA DGX A100 price tag justified by the flexibility of having one golden device that can span the field from state-of-the-art artificial intelligence models to ultra-precise scientific codes?

A question remains: will a unified approach to high performance computing keep NVIDIA at the top of the stack when it comes to computational output per unit of power? NVIDIA will see challenges from the field. For example, Graphcore is laser-focused on AI acceleration with its Intelligence Processing Unit, or IPU. And while its focus on speeding up sparse matrix arithmetic won’t land it at the top of the FP64 processing competition, Graphcore has shown impressive results on specific codes such as those leveraging natural language processing or Markov chain Monte Carlo probabilistic models. There’s also Tenstorrent, a Toronto-based startup focused solely on speeding up machine learning models. This hardware company has attracted some serious talent with the addition of Jim Keller, one of the most respected processor architects in the industry. And while Intel may have been slow out of the gate, Ponte Vecchio is coming, and with the recent announcement of the die with 8,192 cores, there will be a certain disruption to a market that NVIDIA has well covered at the moment.

Finishing where we started, what does this all mean for the fastest supercomputers in the world? Here’s a quick list of what to look out for:

- GPUs, specifically those built by NVIDIA for now, will lead the way forward in the most efficient supercomputers.

- As new architectures emerge, the HPC community will continue to debate the future of the LINPACK benchmark. It isn’t a new discussion.

- The push to exascale will march forward, and we shouldn’t be surprised to see a temporary drop in efficiency as the US, Europe, Japan and China all push for exascale dominance.

- With the myriad of accelerators coming to market, we should see an interesting shift in the next few years away from a chip technology chart that is pure Intel. Competition is a good thing.

To learn more, join me at NVIDIA GTC 2021 where I’ll be discussing this and more during my video presentation, “Sustainability, Support, Scale – Preparing the DGX-Ready Data Center”. Registration for the event is now open.