Insights

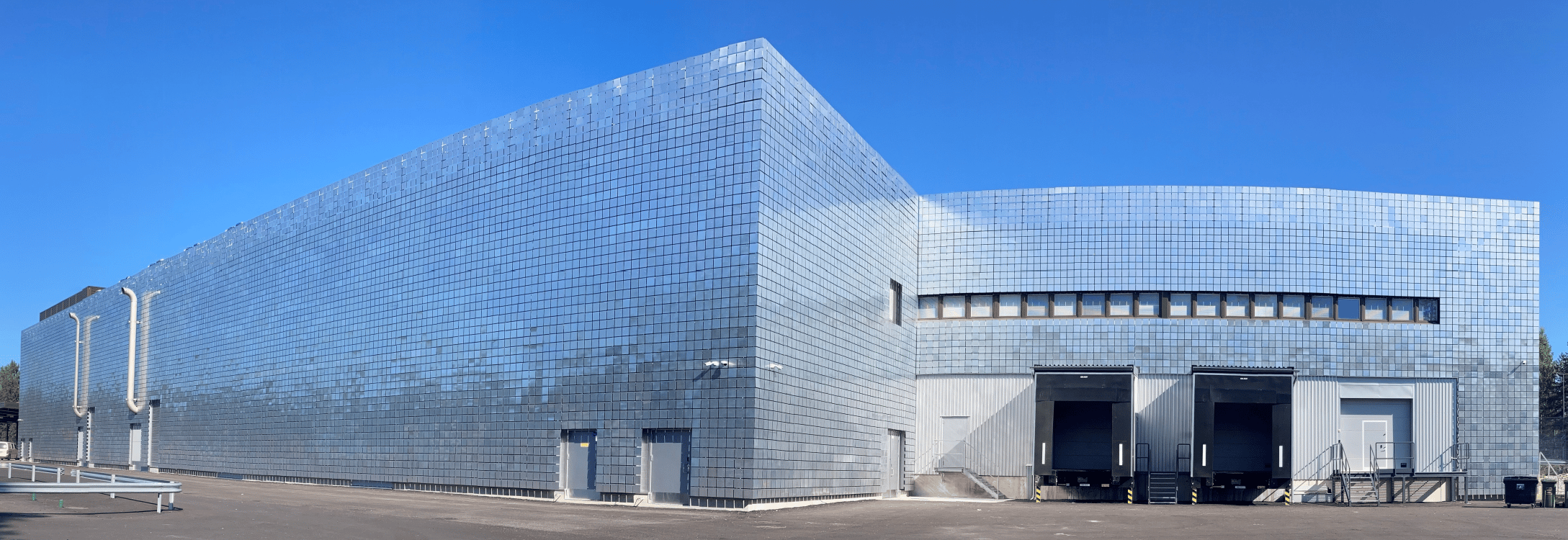

Our groundbreaking innovation is making a big impact. Find out more in the latest news, blogs and resources from Verne.

Latest News

Blogs

Newsletter sign-up

Subscribe to our newsletter

Ready to start your journey to sustainability? Complete the form and get the latest from Verne straight to your inbox, every quarter.